Can anybody with fabrication experience say more about this? Very exciting ideas, but I’m hoping to learn more about them in a real-world context.

The argument is that processing data physically “near” where it is stored (known as NDP, near-data processing, in contrast to traditional architecture designs, where data is stored off-chip) is more power-efficient and lower-latency for a variety of reasons (interconnect complexity, pin density, lane charge rate, etc.). Someone came up with a design that uses NDP to do complex computations much faster than before.

Personally, I’d say traditional computer architecture is not going anywhere, for two reasons. First, these esoteric new architecture ideas such as NDP, SIMD (probably not esoteric anymore, since GPUs and vector instructions both do this), and in-network processing (where your network interface does compute) are notoriously hard to work with. It takes a CS master’s level of understanding of the architecture to write a program in the P4 language (which doesn’t allow loops, recursion, etc.). No matter how fast your fancy new architecture is, it’s worthless if most programmers on the job market can’t work with it. Second, there are too many foundational tools and applications that rely on traditional computer architecture. Nobody is going to port their 30-year-old stable MPI program to a new architecture every 3 years. It’s just way too costly. People want to buy new hardware, install it, compile existing code, and see big numbers go up (or down, depending on which numbers).

I would say the future is a mostly von Neumann machine with some of these fancy new toys (GPUs, memory DIMMs with integrated co-processors, SmartNICs) as dedicated accelerators. Existing application code probably will not be modified. However, the underlying libraries will be able to detect these accelerators (e.g. GPUs, DMA engines) and offload supported computations to them automatically to save CPU cycles and power. Think of your standard memcpy() running on a dedicated data mover on the memory DIMM if your computer supports it. This way, your standard 9-to-5 programmer can still work like they used to and leave the fancy performance optimization stuff to a few experts.
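To make the "library detects the accelerator and offloads" idea concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `register_accelerator` and `copy_buffer` are names invented for illustration, and a real library would probe actual hardware (a DMA engine, say) rather than take a callback. The point is only that the caller's code does not change whichever path runs.

```python
from typing import Callable, Optional

# Hypothetical slot for an accelerator-backed copy routine. A real library
# would fill this in by probing the hardware when it is loaded.
_ACCELERATED_COPY: Optional[Callable[[bytearray, bytes], None]] = None


def register_accelerator(copy_fn: Callable[[bytearray, bytes], None]) -> None:
    """Install an accelerator-backed copy routine (hypothetical hook)."""
    global _ACCELERATED_COPY
    _ACCELERATED_COPY = copy_fn


def copy_buffer(dst: bytearray, src: bytes) -> str:
    """Copy src into the start of dst, offloading if an accelerator exists.

    Returns the name of the path taken so the dispatch is observable.
    """
    if len(dst) < len(src):
        raise ValueError("destination buffer too small")
    if _ACCELERATED_COPY is not None:
        _ACCELERATED_COPY(dst, src)  # offloaded path
        return "accelerator"
    dst[: len(src)] = src  # plain CPU fallback
    return "cpu"
```

Application code just calls `copy_buffer(buf, data)`. Whether the copy ran on the CPU or on the registered data mover is the library's business, which is the whole appeal for the 9-to-5 programmer.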

Good, well thought out points.

I’ll add that von Neumann machines are more likely to be used in mobile devices and appliances.

I seriously doubt these could be mass-produced in any meaningful way due to the rarity of the requirements. I’d love to hear a more practical argument for this though.

“2D” fab isn’t new, and correct me if I’m wrong, but that is sort of how AMD got its start. It’s just the idea of fixing heat dissipation to solve for Moore’s Law, but it requires novel materials that didn’t exist yet. This has cropped up in various forms for metal and silicon dynamic replacements over the decades, and I think the last big news I heard about this was 10 years ago, regarding graphene being a cheap and plentiful replacement for silicon, and here we are with no proofs of concept.

It’s a paper I guess, but not anything that has the feasibility of showing up in the real world. If anything, I think these labs are working on shrinking quantum computational units down to be more useful for everyday computing, since they kind of already “work”.

Edit: also some recent news about transistor heat dissipation.

Interesting. In this particular case it’s implementing a single operation, but I can imagine they could implement other dedicated single-operation chips as well. So I’d expect ASICs but no CPUs.

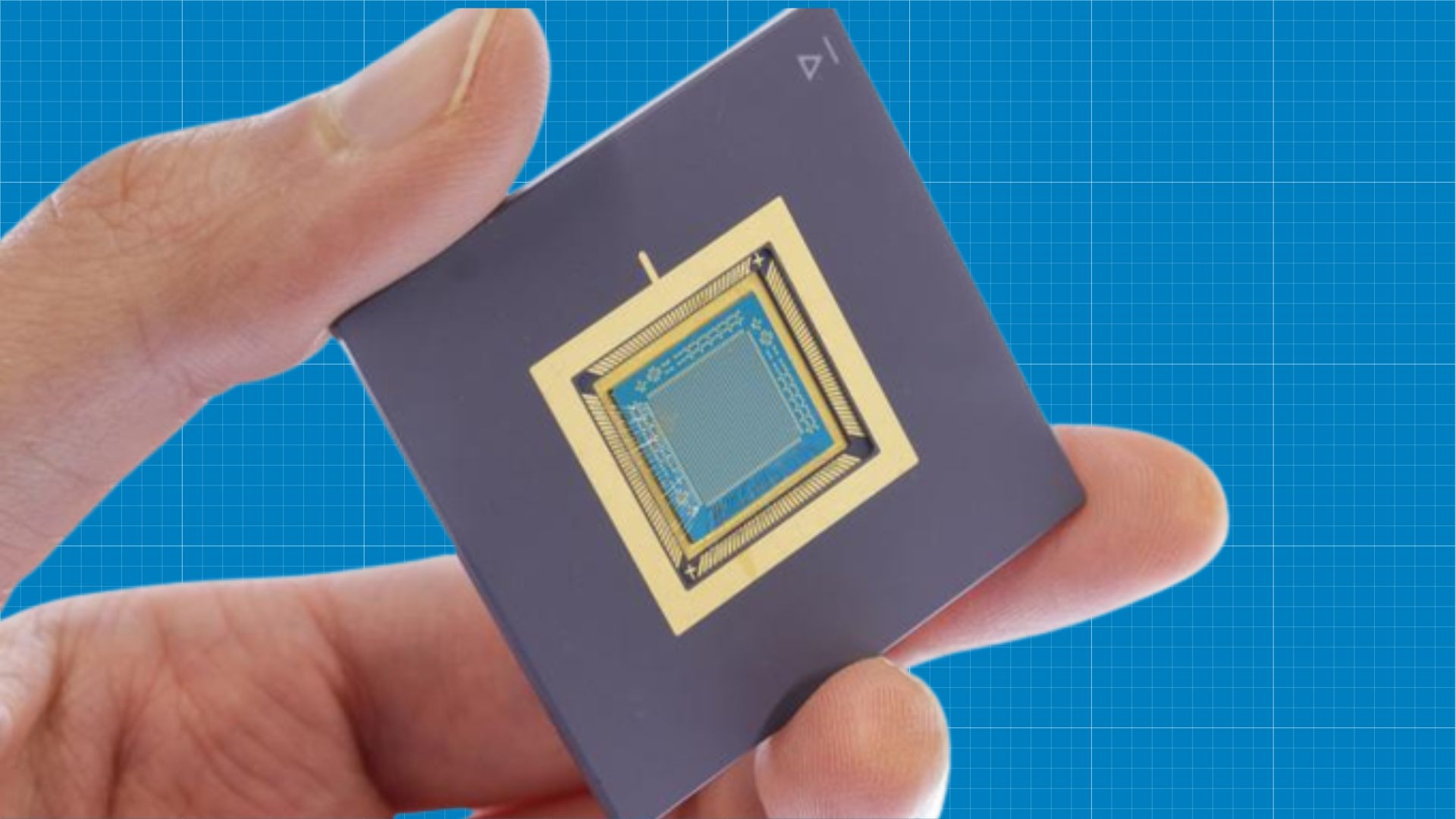

https://actu.epfl.ch/news/redefining-energy-efficiency-in-data-processing/

By setting the conductivity of each transistor, we can perform analog vector-matrix multiplication in a single step by applying voltages to our processor and measuring the output
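For anyone wondering what "in a single step" means numerically, here is a rough sketch in Python of the usual idealized picture. It assumes each device obeys Ohm's law and the currents on a shared output line simply add up (Kirchhoff's current law). The linked article does not spell out the circuit, so treat the layout and the function name `analog_vmm` as illustrative assumptions.

```python
from typing import List


def analog_vmm(voltages: List[float],
               conductances: List[List[float]]) -> List[float]:
    """Idealized analog vector-matrix multiply.

    conductances[i][j] is the programmed conductance linking input line i
    to output line j. Each device passes current voltages[i] * conductances[i][j],
    and the currents on output line j sum, so
    currents[j] = sum_i voltages[i] * conductances[i][j].
    """
    if len(voltages) != len(conductances):
        raise ValueError("need one row of conductances per input voltage")
    n_outputs = len(conductances[0])
    return [
        sum(v * row[j] for v, row in zip(voltages, conductances))
        for j in range(n_outputs)
    ]


# Two input lines, two output lines.
currents = analog_vmm([1.0, 2.0], [[1.0, 0.5],
                                   [0.25, 2.0]])
print(currents)  # [1.5, 4.5]
```

In software this is a loop over every element. In the analog version the physics does all the multiply-adds at once, and you only pay for applying the voltages and reading the currents.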

Still, I don’t think it’ll need to get much more complex to be very useful for AI workloads.

People have been discovering that more, and simpler, calculations seem to work better. The trend in AI workloads seems to have gone from FP32 -> FP16 -> INT16 -> INT8 and possibly even INT4?

Seems like just having lots of simple calculations is more efficient/effective than more complex stuff.
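As a rough illustration of what dropping from FP32 to INT8 involves, here is a sketch of symmetric per-tensor quantization in Python. This is one common scheme picked for illustration, not a claim about what any particular framework does, and `quantize_int8` / `dequantize` are made-up names.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step, then clamp to the int8 range.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


codes, scale = quantize_int8([1.27, -0.64, 0.0])
print(codes)  # [127, -64, 0]
```

Each recovered value is off by at most half a step (`scale / 2`), so the hardware only ever does small-integer math, and the network has to tolerate that rounding error.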

Well, these chips perform analog math, which means high precision and high speed. It’s not as accurate as FP32 in the sense of repeatable, deterministic outputs, but that’s definitely not a problem for a deep and wide neural network such as those used by LLMs.
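To show what "not repeatable" looks like in practice, here is a toy Python sketch. It assumes every weight is off by a fresh random factor of up to ±1% on each evaluation. That uniform noise model and the name `noisy_dot` are illustrative assumptions, not measurements of any real device.

```python
import random
from typing import List


def noisy_dot(weights: List[float], inputs: List[float],
              rel_noise: float, rng: random.Random) -> float:
    """Dot product where each weight is perturbed by up to +/- rel_noise."""
    return sum(
        w * (1.0 + rng.uniform(-rel_noise, rel_noise)) * x
        for w, x in zip(weights, inputs)
    )


rng = random.Random(0)  # seeded only so the sketch can be re-run
weights, inputs = [0.2, 0.5, 0.3], [1.0, 2.0, 3.0]
exact = sum(w * x for w, x in zip(weights, inputs))  # about 2.1
first = noisy_dot(weights, inputs, 0.01, rng)
second = noisy_dot(weights, inputs, 0.01, rng)
print(exact, first, second)
```

The two noisy results differ from each other and from the exact value, but with all-positive terms like these, each stays within 1% of it. Whether a given network shrugs off that kind of error is an empirical question. The sketch only shows the shape of the problem.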