I must confess to getting a little sick of seeing the endless stream of articles about this (along with the season finale of Succession and the debt ceiling), but what do you folks think? Is this something we should all be worrying about, or is it overblown?

EDIT: have a look at this: https://beehaw.org/post/422907

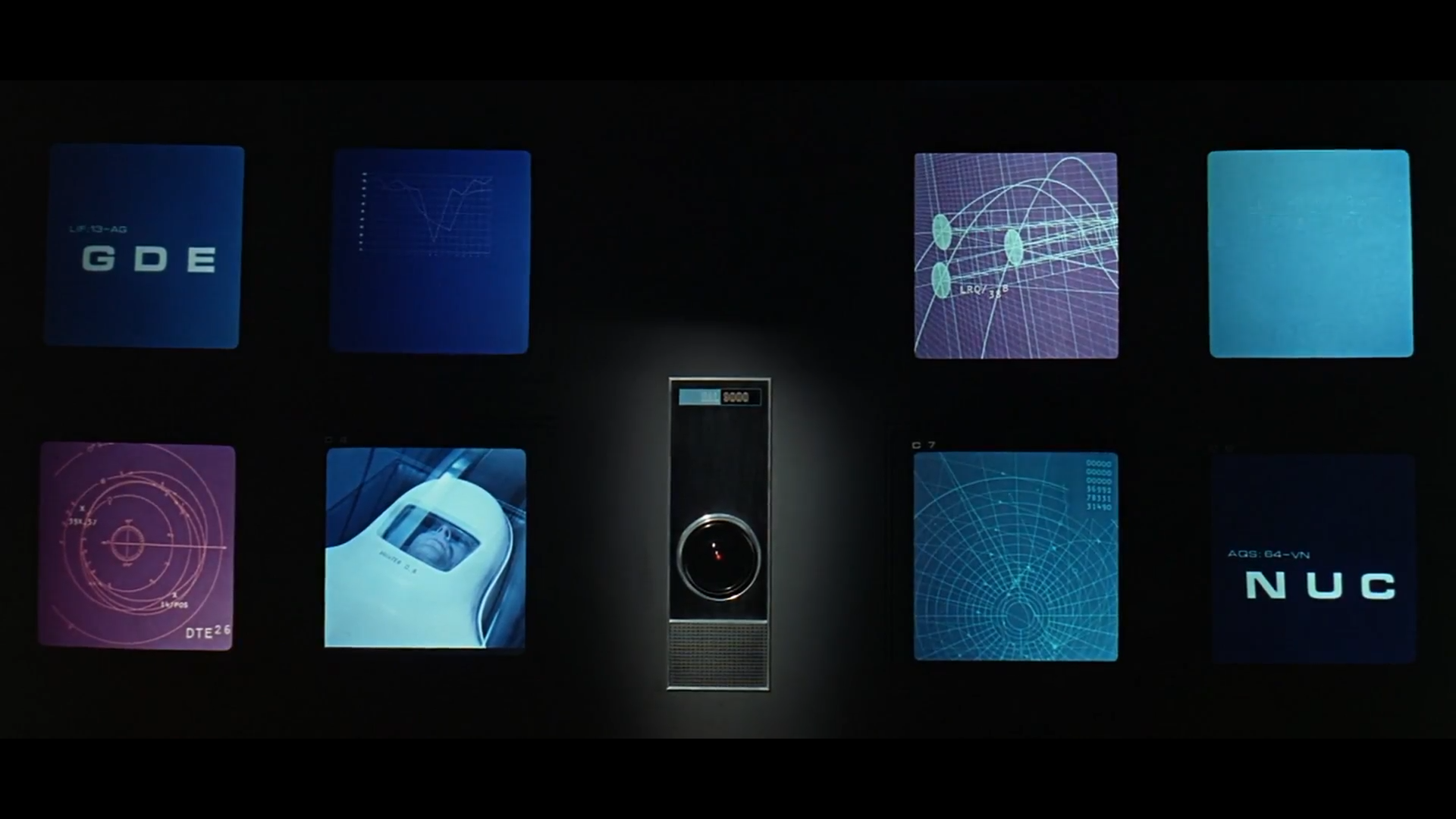

This video by supermarket-brand Thor should give you a much more grounded perspective. No, the chances of any of the LLMs turning into Skynet are astronomically low, probably zero. The AIs are not gonna lead us into a robot apocalypse. So, what are the REAL dangers here?

So no, we’re not gonna see Endos crushing skulls, but if measures aren’t taken we’re gonna see inequality get way, WAAAY worse very quickly all around the world.

Kyle Hill looks like if Thor and Aquaman had a baby. A very nerdy baby.

Hi @jherazob@beehaw.org, finally got around to watching the video, thanks for letting me know about it.👍 One thing that really befuddles me about AI is the fact that we don’t know how it gets from point A to point Z as Mr. Dudeguy mentioned in the video. Why on earth would anyone design something that way? And why can’t you just ask it, “ChatGPT, how did you reach that conclusion about X?” (Possibly a very dumb question, but anyway there it is 🤷).

We designed it that way because it works better than anything else we’ve ever had. The kind of stuff you can achieve with these deep neural networks is astounding, but also stupidly limited. As to why you can’t ask ChatGPT: because it doesn’t know. It doesn’t know ANYTHING. As mentioned in the comment, all it knows is how to sound right. It’s a language model, so all it knows is language. It knows nothing about the internal workings of AI, because it knows nothing besides language.