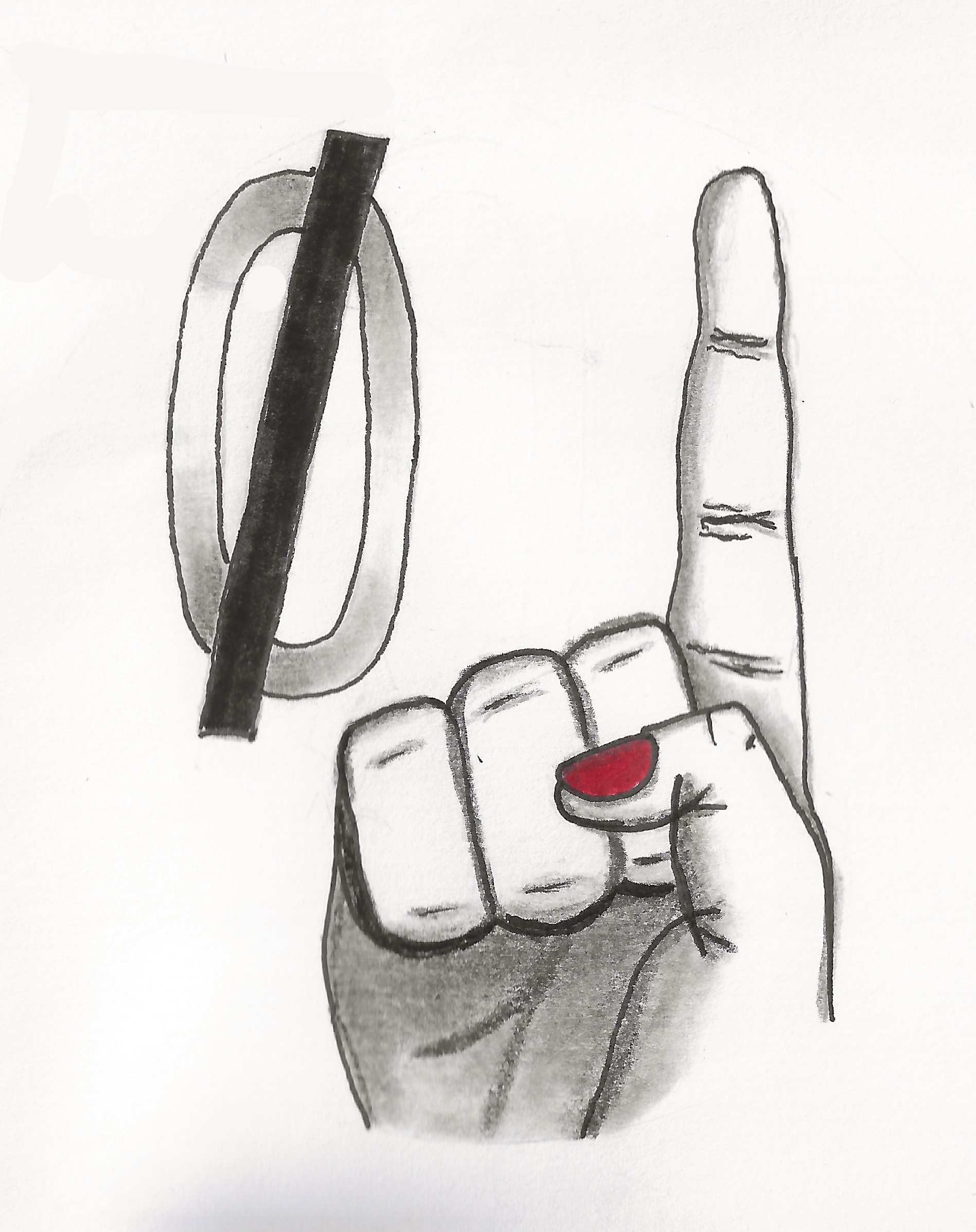

That #1 is crucial. I see a lot of people get stuck in tutorial hell or burn out from doing other people’s projects. Some tutorials are okay if you’re just starting out, but at some point you need to switch to your own projects and challenge yourself.

And since OP mentioned being on/off, I would also just say be consistent. Dedicate some regular time to your own projects so you’re not forgetting stuff before it really sticks.

(Not Mint)* On Arch I used the Arch install script, selected the NVIDIA drivers, and it just worked. I did have to spend some time making sure my NVIDIA GPU was my primary GPU and not my integrated graphics (CPU), but that was the biggest hurdle.