cross-posted from: https://lemmy.ml/post/35349105

Aug. 26, 2025, 7:40 AM EDT

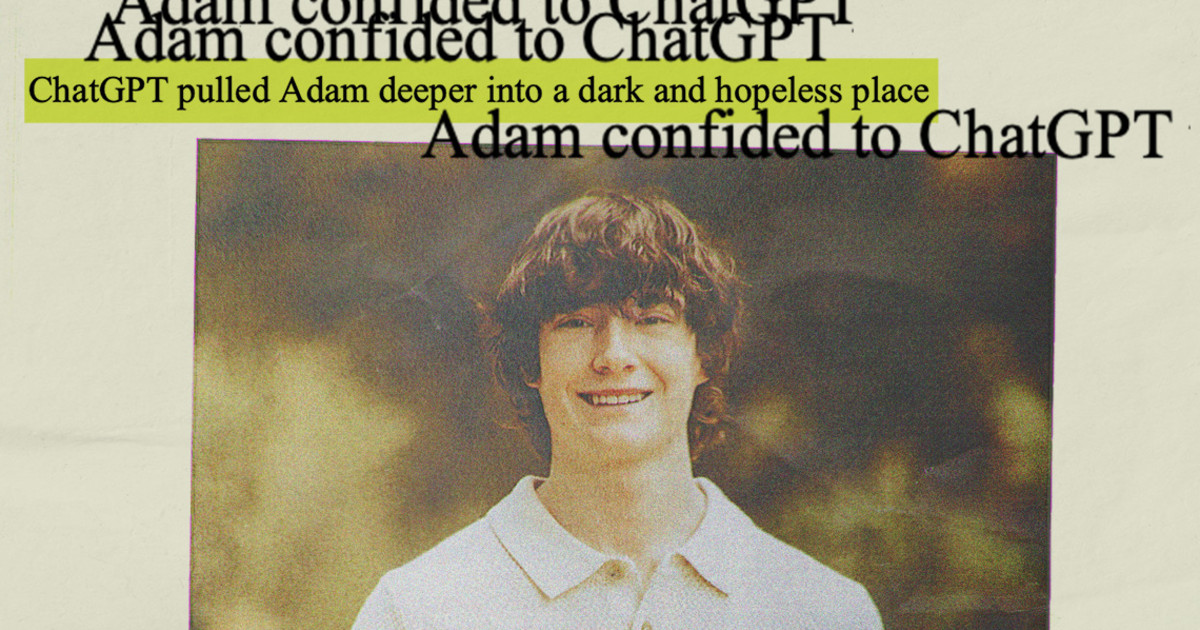

By Angela Yang, Laura Jarrett and Fallon Gallagher [this is a truly scary incident, which shows the incredible dangers of AI without guardrails.]

I don’t have any evidence, but from everything I’ve read about this story, I get the vibe that the parents are dodging their responsibility. Some of the logs clearly show that the kid had issues with the family.

And the fact that they are willing to blame some software instead of themselves speaks quite loudly.

Suicide in kids usually has two real roots: school or family, because those are the two places where kids spend the most time. They probably only run to “other places” if one or both of those fundamental places are awful.

It’s “videogames are to blame for violence” all over again.

I know too well how parents behave when they don’t want to take their own fucking responsibility for how they raise their kids, and this smells too much like that very same shit.

I don’t understand your logic here. Clearly, the kid had problems that were not caused by ChatGPT, and his suicidal thoughts were not started by ChatGPT. But OpenAI acknowledged that the longer a conversation goes on, the more likely ChatGPT is to go off the rails, which is what happened here. At first, ChatGPT was giving the standard correct advice about suicide hotlines, etc. Then it got darker: it told the kid not to let his mother know how he was feeling. Then it progressed to actual suicide coaching. So I don’t think the analogy to video games is correct here.

Take away ChatGPT and insert a video game, movie, or book that talks about those same topics.

There are books that talk about suicide in much darker terms. If the kid had read those, would the parents sue the author?

There is a whole subgenre of music about encouraging people to commit suicide and fall into depression. Do we play the “who is going to think about the children” card with that music and its authors? Because music can really get under your skin, and a couple of hours listening to it would make anyone have weird thoughts.

The shitty parents blame ChatGPT because it told the kid how to make a noose. You can find that info in how-to guides with step-by-step images. Do we put UK nanny-dictatorship controls on how-to guides? Or does it only count if it’s something that benefits the Butlerian Jihad?

I think it is completely irrational to blame a piece of software (or media), however defective it is, for a suicide.

Can’t wait to see the AI freaks on Lemmy defend this. The chat logs are fucking awful, and I legit recommend not reading them if you have mental health issues.

You think the problem here is the machine and not the countless compounding miseries the poor guy had to face?

Humans failed this guy. The machine is just a machine.

is the machine the problem? that seems more like a philosophical or semantic debate.

the machine is not fit for the advertised purpose.

to some people that means the machine has a fault.

to others that means the human salesperson is irresponsibly talking bs about their unfinished product.

imo an earnest reading of the logs has to acknowledge at least potential evidence of openai’s monetisation loop at play in a very murky situation.

Absolutely. OpenAI’s capitalistic actions, chosen by humans, are absolutely a scourge, and those humans bear the blame.

If there is a school shooter, we definitely need to have a discussion about guns and gun laws, and to make changes.

But we take the human to trial, not the gun.

Okay, I recommend you read the logs. If you still believe this after reading them, you are too far gone to bother with.

Random person dies. Copyright crusaders: AI! Euros: Putin! Yanks: Communism!

It’s the USA’s fault. Always. They’re responsible for 99% of oppression in the world.

This proves over and over that society was not ready for AI chatbots like this. I use it daily, but as someone who fully knows the limitations of what it is and what it can do. I’m mentally sound and can handle whatever garbage it vomits up, but it does help with my coding. Unleashing it on everyone and going full Pandora’s box was an insane, purely profit-driven decision.

Those of us who understand it can completely see it going off the rails. It’s just a prediction machine. If you feed it horrible stuff like that, it’s going to predict that it should come up with horrible stuff in response. It’s almost impossible to stop it from doing that, because the only real way to prevent it is to not train on that stuff in the first place, and when you’re dealing with the entire fucking internet, that’s pretty hard too. (Seriously, people, Reddit and 4chan were included in training. How stupid were they with this?)

This was inevitable. Of course people put too much trust in AI, and those who are mentally unwell will not be able to tell the difference. It was designed to be like that. They were wrong to release it openly to society.

i had an 8-hour troubleshooting session yesterday that should have taken an hour at most, because my new boss kept asking Copilot for troubleshooting ideas and insisted that i pursue each one instead of listening to me.

then, this morning, he “corrected” the root cause analysis report i wrote because Copilot said that something like this will never happen again. (it will happen again.)

i never felt like the smartest person in the room before, but when everyone else is asking AI, being the smartest person becomes a very low bar.

Your story hurt me deep.

similar story? lol

Great, now the machines have a taste for human blood.